Liz Acosta is a Developer Advocate at Vonage. While her career path from film student to marketer to engineer to Developer Advocate might seem unconventional, it’s pretty typical for Developer Relations! Liz loves pizza, plants, pugs, and Python.

AI and Healthcare: An Evening With PyLadies in San Francisco

One of my favorite tech events in the Bay Area is PyLadies, a monthly Python meetup focused on supporting and elevating people of marginalized genders, particularly those who identify as women. In years past I’ve been honored to give a couple of talks for them.

In March of this year, I was able to provide even more support as a developer advocate for Vonage and sponsor food for the evening. To a full house at the LinkedIn office in San Francisco, I gave a talk about WebSockets, a protocol that enables persistent, real-time communication between client and server.

The next two talks addressed AI and healthcare from very different yet complementary perspectives. The first speaker, Senay Yakut, an ER nurse turned engineer, discussed the importance of human oversight in AI integration. The second, Mansi More, an AI engineer and developer advocate, walked through practical AI applications in healthcare. Together, these talks underscored a central question: how should humans and AI collaborate in high-stakes environments like healthcare?

In the first talk, Senay discussed why integrating AI into healthcare requires experts from the field. While acknowledging that artificial intelligence can improve patient care, she emphasized that doctors, nurses, and other healthcare professionals should always have the final say in any medical decision. When it comes to human lives, she pointed out, we can’t afford the mistakes AI has the potential to make.

For example, Senay highlighted that clinicians spend about 50% of their time on documentation and administrative tasks rather than working with patients. These are tasks that AI could help with. On the other hand, she pointed out that an AI-hallucinated drug dosage can kill a patient – especially in a medical crisis when decisions need to be made quickly, something she’s witnessed firsthand as an ER nurse.

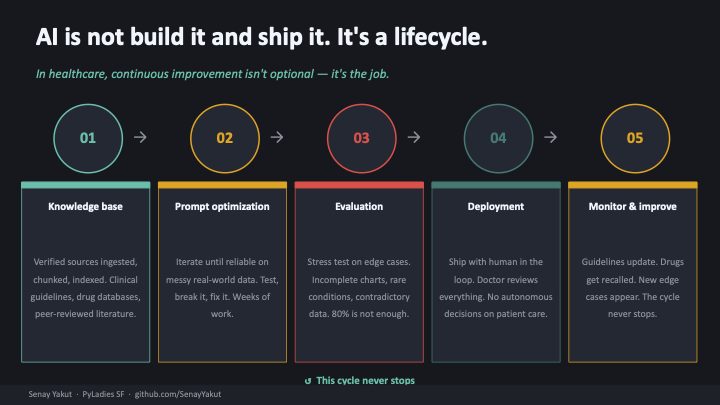

“Human-in-the-loop is the principle I build around” is how she explains the approach she takes to her coding projects, which you can find in her GitHub repository. For Senay, AI in healthcare is about more than just building and shipping; it’s a lifecycle that requires intention and continuous iteration. She said, “I didn’t become an AI engineer despite being an ER nurse, but because of it.”
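To make the principle a little more concrete, here is a minimal Python sketch of a human-in-the-loop gate. This is not Senay’s code. The `Suggestion` class, the `review` and `act_on` functions, and the sample values are all hypothetical names I made up for illustration, and a real clinical system would need far more than this (audit logs, authentication, and so on). The only idea it shows is that nothing a model produces gets acted on until a named person signs off.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    """A model-generated recommendation waiting for human review (hypothetical)."""
    patient_id: str
    text: str
    approved: bool = False           # nothing is approved by default
    reviewer: Optional[str] = None   # who made the final call


def review(suggestion: Suggestion, reviewer: str, decision: bool) -> Suggestion:
    """Record a clinician's decision on a suggestion."""
    suggestion.approved = decision
    suggestion.reviewer = reviewer
    return suggestion


def act_on(suggestion: Suggestion) -> str:
    """Refuse to act on anything a human has not explicitly approved."""
    if not suggestion.approved or suggestion.reviewer is None:
        raise PermissionError("Clinician approval required before acting.")
    return f"Applied for {suggestion.patient_id}: {suggestion.text}"


if __name__ == "__main__":
    # Made-up example data, not real patient information.
    draft = Suggestion("patient-001", "Draft discharge summary")
    try:
        act_on(draft)
    except PermissionError as err:
        print(err)

    review(draft, reviewer="Example Clinician", decision=True)
    print(act_on(draft))
```

The design choice worth noticing is the default: a suggestion starts out unapproved, so forgetting the review step fails loudly instead of silently letting the model decide.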

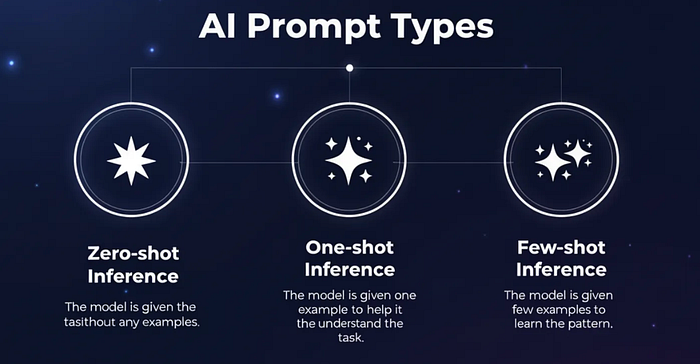

A slide illustrating AI in healthcare as a lifecycle of continuous improvement from knowledge base to prompt optimization to evaluation to deployment to monitoring and improvement.

In the next talk, AI engineer and developer advocate Mansi More reminded us to return to foundational concepts. When it comes to understanding how generative AI actually works, Mansi said, “We all forgot to learn the basics.” In this case, the basics she was referring to are how datasets train foundational models, how supplemental data and fine-tuning refine those models, and how to craft specific prompts to get useful results. She summed it up with, “The model has the capability. It is your responsibility to ask wisely.”
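One way to picture what “asking wisely” can look like is the difference between zero-shot, one-shot, and few-shot prompts, where the only thing that changes is how many worked examples you include before the real question. The sketch below is my own illustration, not code from Mansi’s talk or write-up. The `build_prompt` function, the task wording, and the sample notes are all hypothetical, and it only assembles prompt text without calling any model.

```python
from typing import Sequence, Tuple


def build_prompt(task: str, query: str,
                 examples: Sequence[Tuple[str, str]] = ()) -> str:
    """Assemble a prompt with zero, one, or several worked examples."""
    lines = [task, ""]
    # Each example pairs an input with the answer we would want back.
    for example_note, example_category in examples:
        lines += [f"Note: {example_note}", f"Category: {example_category}", ""]
    # The real question comes last, with the answer left blank for the model.
    lines += [f"Note: {query}", "Category:"]
    return "\n".join(lines)


# Made-up examples for illustration only, not real patient data.
EXAMPLES = [
    ("Patient reports chest pain radiating to the left arm.", "cardiac"),
    ("Patient has a persistent cough and wheezing.", "respiratory"),
]

task = "Classify the clinical note into one category."
query = "Patient describes shortness of breath when climbing stairs."

zero_shot = build_prompt(task, query)               # no examples
one_shot = build_prompt(task, query, EXAMPLES[:1])  # exactly one example
few_shot = build_prompt(task, query, EXAMPLES)      # several examples

if __name__ == "__main__":
    print(few_shot)
```

The resulting strings could be sent to whichever model you are working with. The examples give the model a pattern to follow, which is often the cheapest way to steer its output before reaching for fine-tuning.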

A slide illustrating the different types of AI prompts as zero-shot inference, one-shot inference, and few-shot inference.

When it comes to healthcare, she pointed out that patient data – handwritten notes, lab results, surgery recordings – is largely unstructured and inaccessible to conventional software. AI provides a pathway to make this data actionable and potentially save lives.

She walked us through healthcare applications with real-world examples in a Google Cloud Platform (GCP) environment, demonstrating how to analyze images and videos and how to optimize queries for useful results. You can find the write-up of her talk on Medium, which also features Python code you can run yourself.

Both talks inspired thoughtful questions from the audience, with some people expressing skepticism and wariness while others marveled at the possibilities of AI. We all agreed that the talks were in-depth and informative.

During the networking and socializing afterwards, I reminisced with someone about listening to music on my iPod Shuffle. I still have it and it still works, and I occasionally consider using it when I go running instead of my smartphone because my Shuffle continues to consistently and reliably do the one thing I want it to do. My phone, on the other hand, is destined for obsolescence: sooner or later it will be forced into updates it can no longer support unless I get a new one. This contrast highlights how modern devices, increasingly shaped by AI features no one asked for, can start to feel more like a burden than a tool.

“The internet used to be fun,” someone lamented.

Unwanted AI offering to summarize a single-line email is an annoyance, but AI misdiagnosing a patient without human intervention is another, more grave issue. AI is impossible to ignore – and so is the apprehension surrounding it.

Like Senay, I come to tech from a non-traditional background. My education is in the arts and humanities, and I moved to San Francisco hoping to make it as an artist, but ended up as an engineer. I understand the value and the urgency of keeping up with the latest tech trends in order to remain competitive. In college, I shot my final film project on 16mm, and one of the closing shots came back from the lab out of focus. By the time it was developed, it was too late to reshoot. Digital video prevents mistakes like this, but at the expense of the textured warmth of film.

One of the earliest films – a train rolling into a station – is said to have caused panic because audiences lacked the visual vocabulary to question what they were seeing. Similarly, generative AI delivers confident-sounding answers that can be completely wrong. As Senay pointed out, a hallucinated drug dosage sounds authoritative to someone unfamiliar with medicine, but it can kill a patient. The stakes are much higher than a confused audience.

Senay and I share a non-traditional path into tech – she from nursing, me from the arts. But we arrived at the same conclusion: technology without intentionality is dangerous. For Senay, that means “human-in-the-loop” decision-making in healthcare. For Mansi, it means developers have to “ask wisely,” understanding that the model’s capability only matters if the person wielding it knows what they’re doing.

Maybe it’s my arts degree or maybe it’s my love of science fiction, but the questions surrounding AI always bring me back to Mary Shelley’s Frankenstein. In Frankenstein, the Creature becomes Victor’s greatest burden precisely because he built without foresight. As developers, it’s on us to build AI systems that are not only powerful, but safe, transparent, and grounded in human oversight – whether that’s a clinician reviewing a diagnosis or a developer asking the right questions of their model.
